View Media Group

Reframing property search as an AI decision assistant

Overview

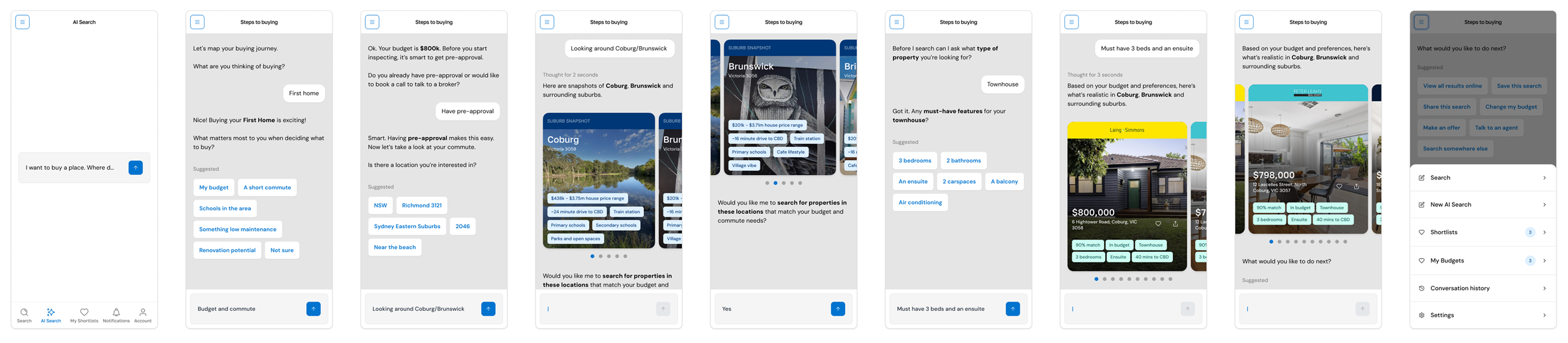

DeepView was a multi-phase product initiative at View Media Group exploring how AI could move property search beyond filters, listings and repeated query cycles into a more continuous, decision-oriented experience.

The work began pragmatically with the integration of an existing AI chat capability into view.com.au, then evolved into a custom AI search experience for limited market testing, and finally into the design of DeepView as a dedicated AI-first property product.

As Senior Product Designer, I worked across product strategy, UX, interaction design, information architecture, prototyping and AI-assisted design operations to help shape that progression.

The challenge

Traditional property platforms are built around inventory retrieval. They assume users know what they want, can express it through filters, and are prepared to jump between results, detail pages, finance tools and suburb research to build confidence.

But property decisions rarely work that way.

Intent usually starts vague and changes as people learn. Budget, location, commute, property type and lifestyle priorities shift in relation to one another. That made this less a search problem than a decision-support problem under uncertainty.

The opportunity was to test how AI could reduce friction, improve relevance and create a more adaptive property experience without losing trust or clarity.

Approach

The initiative evolved through three phases, each testing a different level of product maturity.

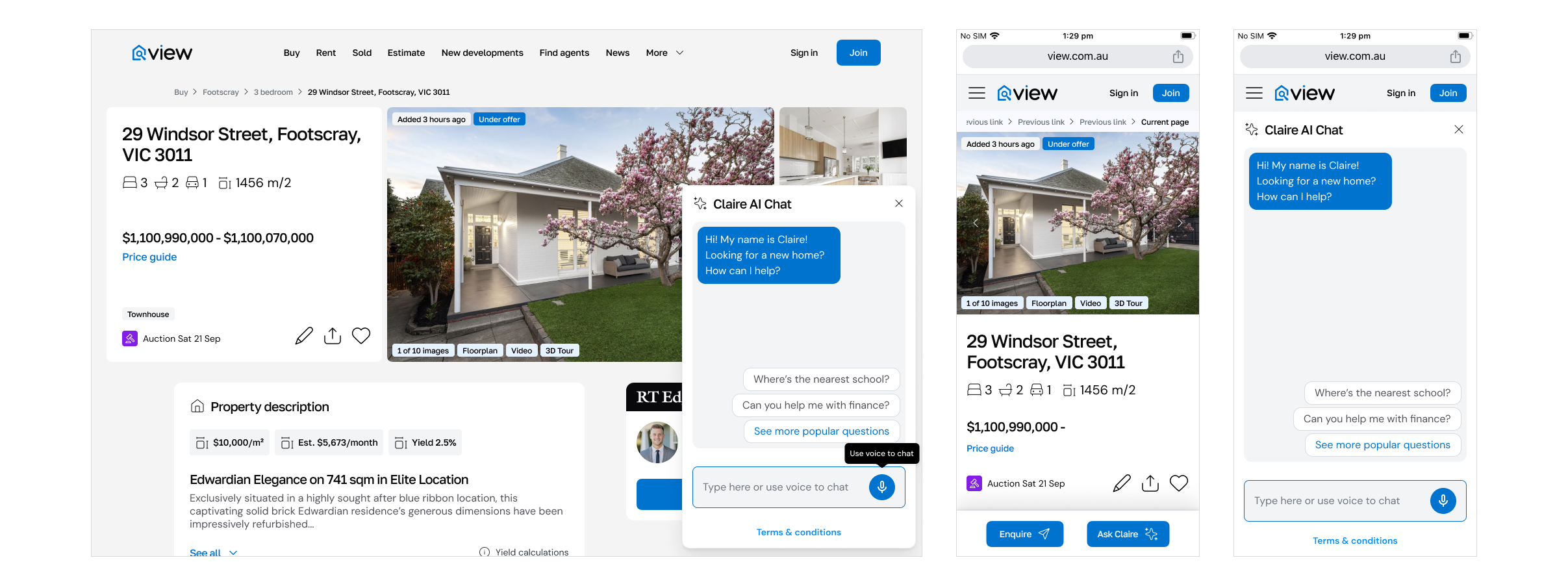

Phase 1 focused on integrating AI into the existing search experience with minimal disruption, so that we could learn quickly.

We integrated Propic’s AI chat tool, Claire, into the existing view.com.au search experience while Propic developed an LLM trained on View’s property-related data. This gave us a practical way to test conversational property assistance inside an established consumer product before committing to a new interaction model.

The design challenge at this stage was not to reinvent search. It was to understand how AI assistance could coexist with familiar property-browsing behaviour, where it added value, where it created friction, and what types of questions or support users were naturally seeking.

This phase established an early behavioural baseline and clarified the gap between bolted-on AI assistance and a more fully AI-shaped search experience.

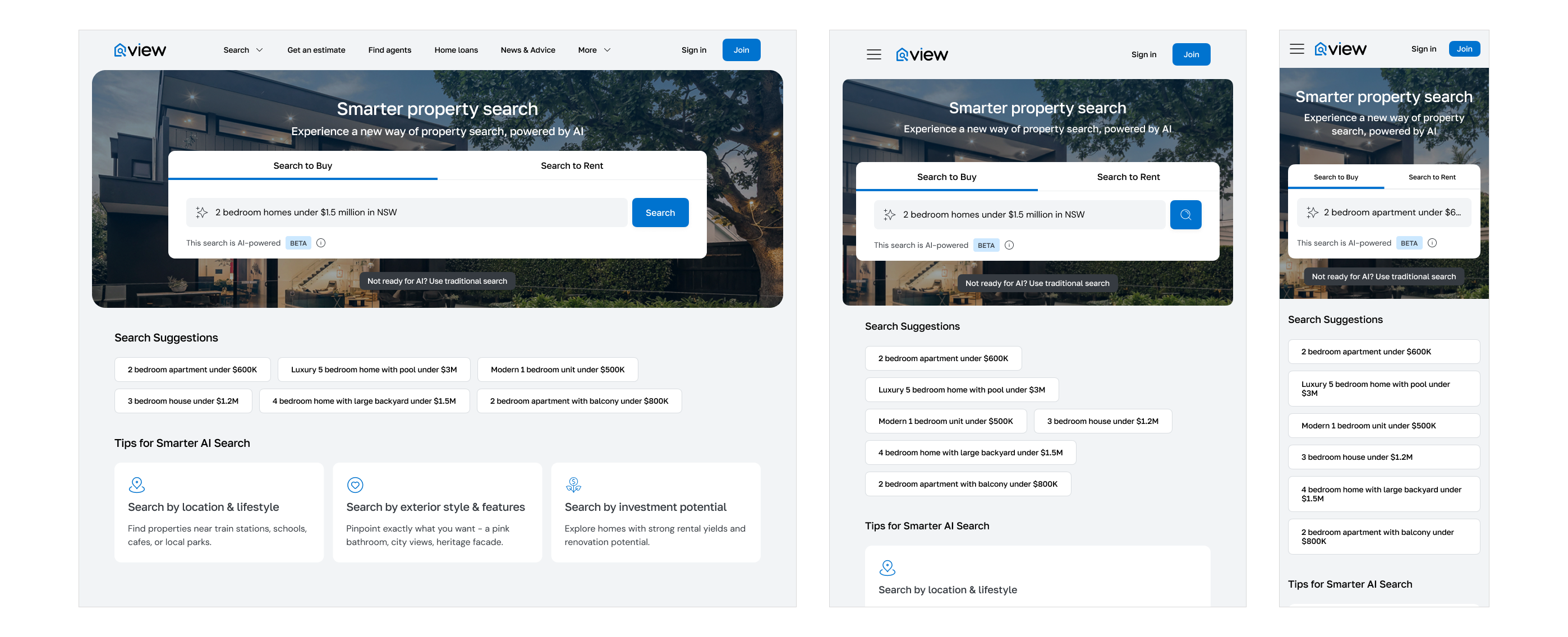

Phase 2 moved from integration to product design: designing and testing a custom AI search experience.

Once the initial learning was in place, the focus shifted to designing an AI search experience built specifically for View and used for limited testing in the Queensland market. This phase explored what happened when AI was treated not as an add-on, but as a first-class part of the search model.

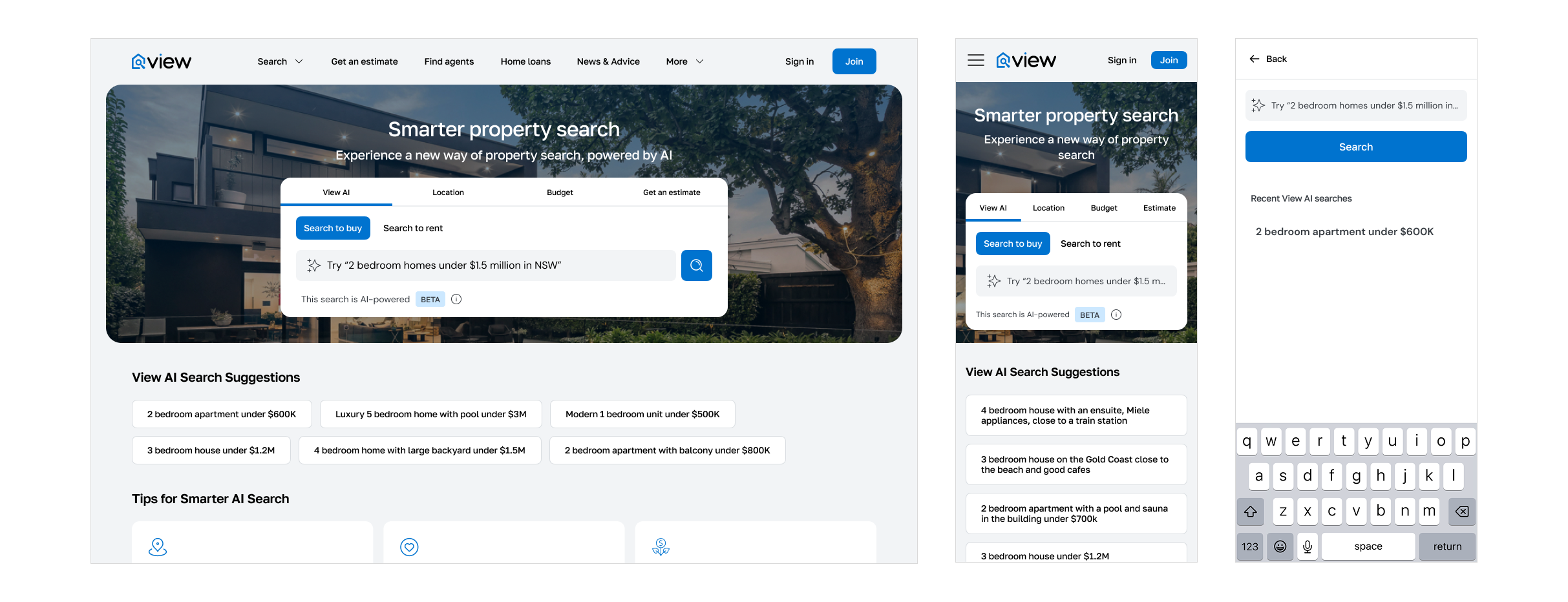

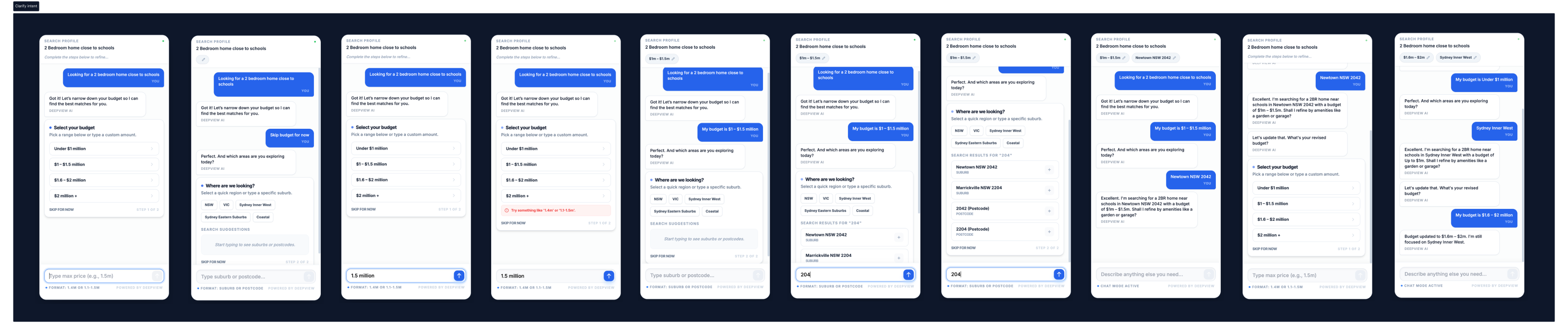

The first part of Phase 2 was an AI-search-focused microsite used to test search UI patterns in a more explicitly AI-mediated context.

This created room to experiment with interaction patterns, query framing, ranking logic, explainability and conversational guidance without the full weight of the main platform. It was an efficient way to test how far users would engage with AI-supported discovery when the experience itself signalled a different mode of searching.

The second part of Phase 2 brought that thinking back into the core product.

This involved redesigning the primary homepage search experience to integrate AI search alongside other specialised search types, and reworking the broader information architecture around search across both the website and the app. Rather than treating AI search as a novelty destination, the aim was to position it within a clearer overall search ecosystem.

This phase expanded the work from interface pattern design into navigation, IA and product framing. It also surfaced an important strategic question: whether AI search should remain one mode among several, or become the organising logic for a more unified experience.

Phase 3 took that question further, designing DeepView as an AI-first product.

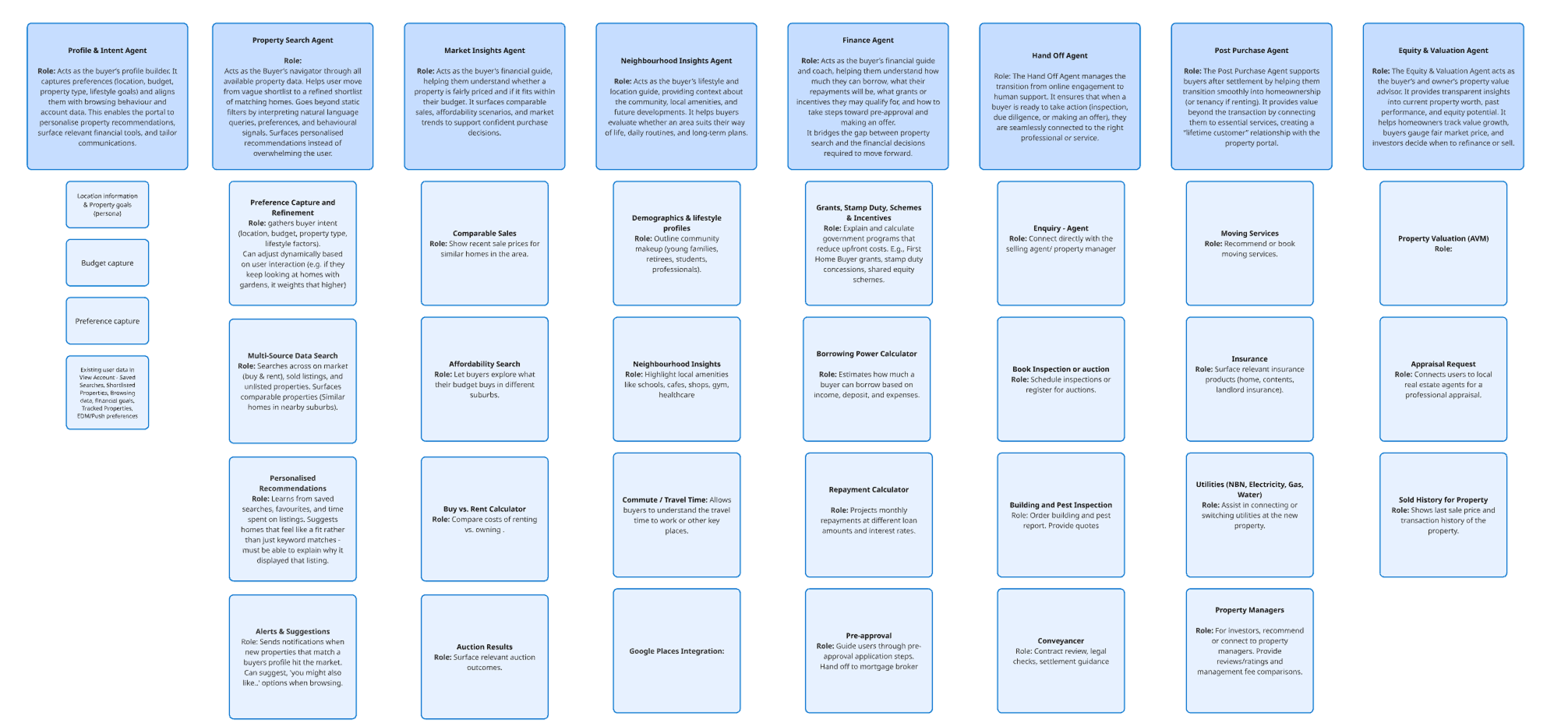

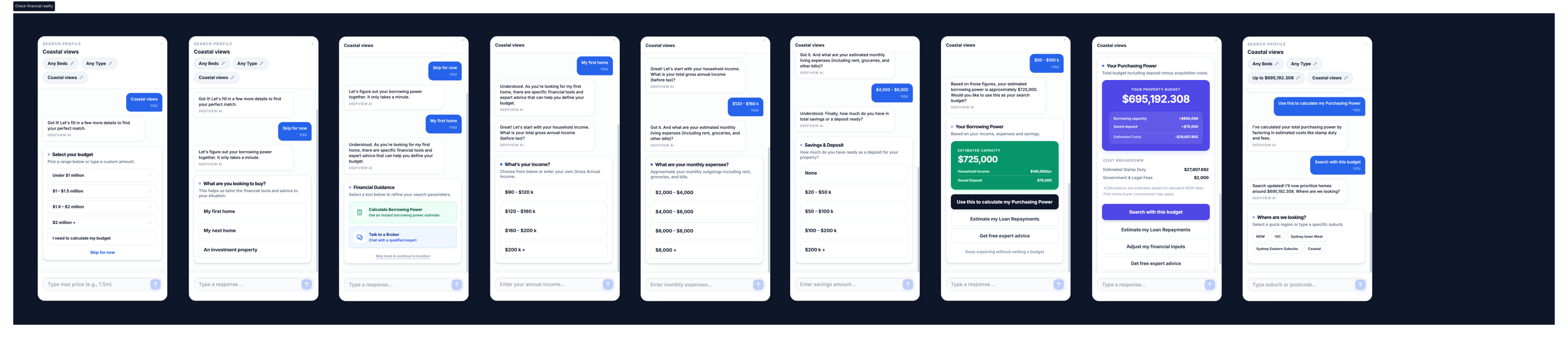

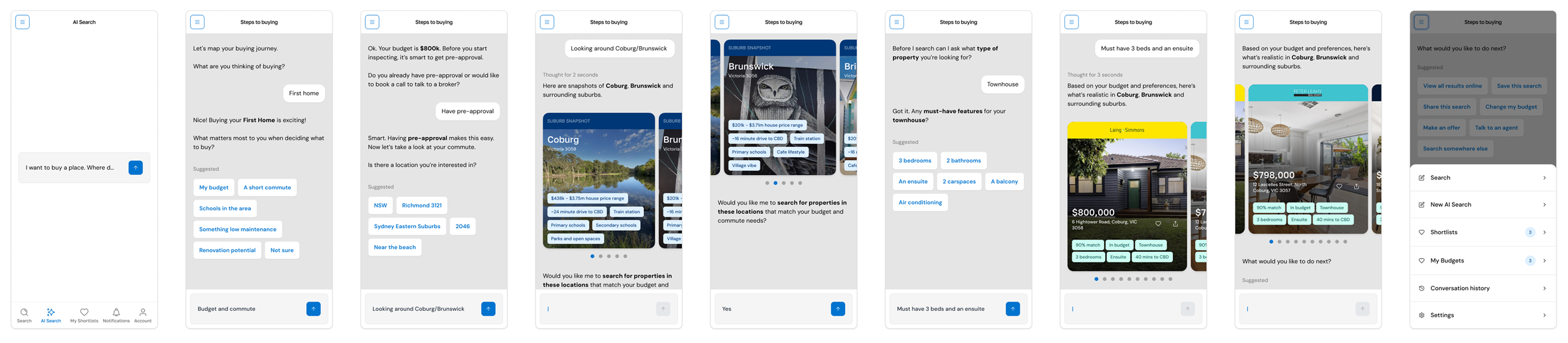

DeepView was designed as a dedicated AI-first property product: not a marketplace with chat attached, but a continuous decision-support experience centred on persistent user intent, visible memory, inline evidence and explainable system behaviour. The underlying concept was simple: one assistant, one flow, no reset states, and properties treated as decision evidence rather than inventory.

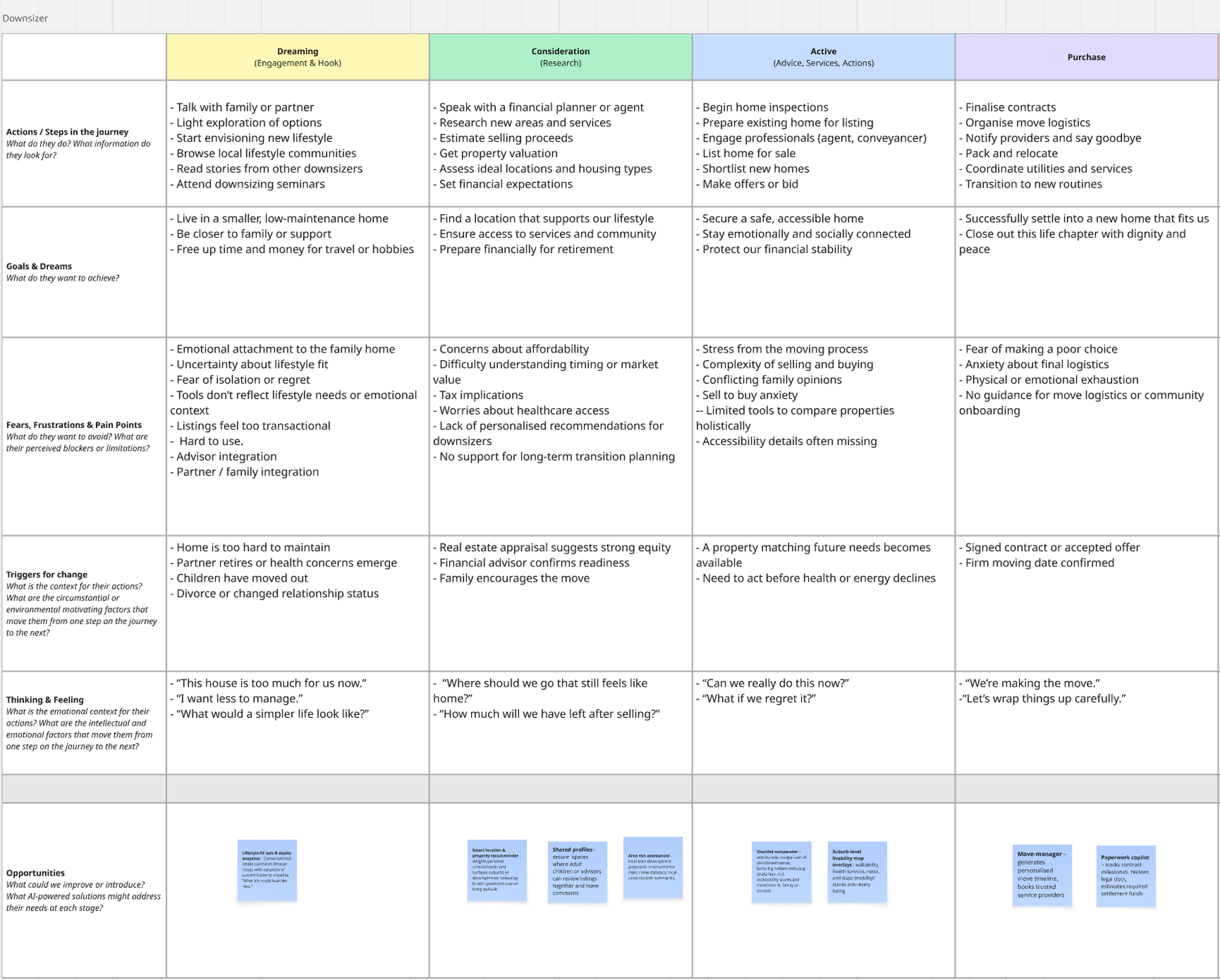

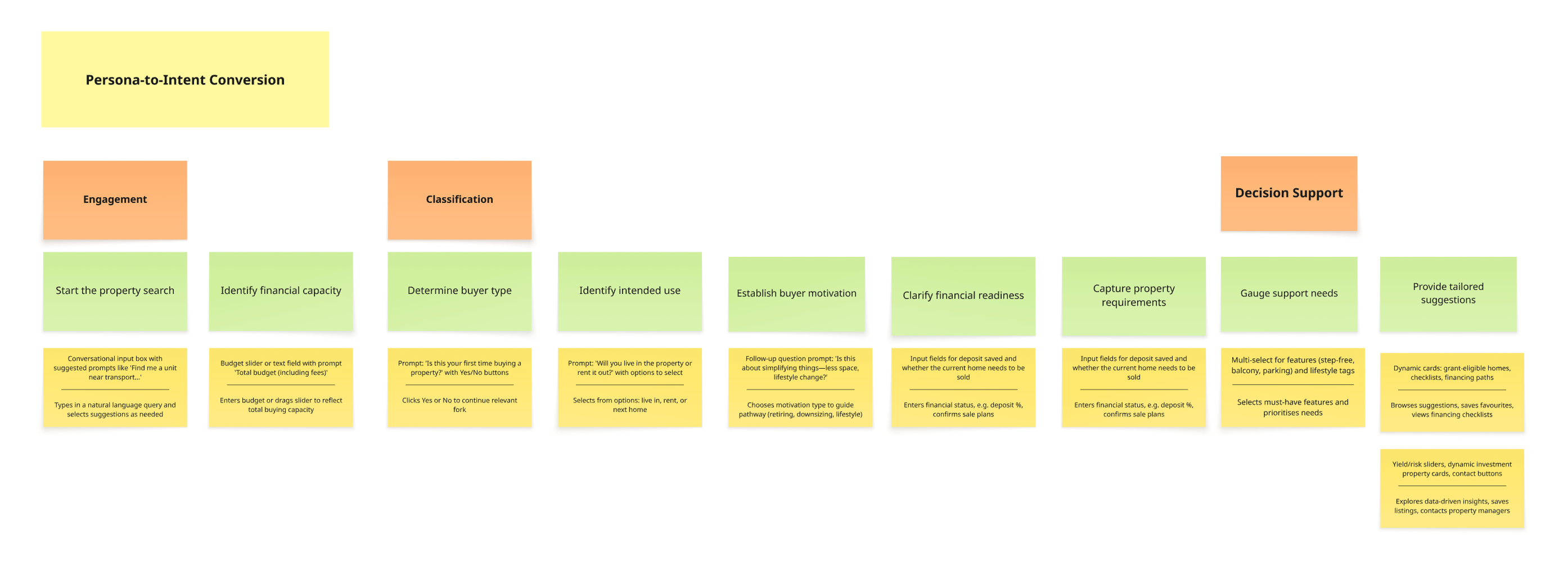

At inception, personas and user journeys were developed to help the engineering team understand the agentic workflows the product would require. That early framing work was important because the challenge was not just UI design. It was designing an experience that mapped to actual orchestration logic, reasoning steps and system behaviours.

Design progressed alongside the team’s demonstrations of those workflows. Seeing those practical flows take shape helped me refine, pressure-test and sometimes reconsider the design principles against what was technically viable, what was genuinely useful, and what level of explainability or continuity the product could realistically support.

The resulting interaction model centred on intent continuity. Users did not repeatedly start new searches, open separate threads or lose progress. Instead, they refined or replaced their intent within one evolving flow. A persistent Context Header / Memory Bar made the system’s understanding visible and editable. A Unified Context Stream brought prompts, listings, insights, actions and reasoning into one mobile-first interface. Listing cards were framed as evidence, not inventory. The Inline Evidence Stack replaced the traditional property detail page with deeper, contextual expansion. Explainability patterns made changes in ranking, assumptions and recommendations visible at the moment they occurred.
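As a rough illustration of intent continuity, the TypeScript sketch below models a single session whose intent is refined in place rather than reset, with each change recorded alongside a reason so that it can be surfaced to the user. It is a minimal sketch under stated assumptions, not the DeepView implementation: the `Intent`, `Session`, `refineIntent` and `memoryBar` names, the field set, and the example suburb and figures are all hypothetical.

```typescript
// Hypothetical model: one evolving intent per session, never reset.
type Intent = {
  budgetMax?: number;
  suburbs?: string[];
  propertyType?: string;
  maxCommuteMinutes?: number;
};

// Each change is stored with a reason, so it can be explained when it happens.
type IntentChange = {
  field: keyof Intent;
  before: Intent[keyof Intent];
  after: Intent[keyof Intent];
  reason: string;
};

type Session = {
  intent: Intent;
  history: IntentChange[];
};

// Merge a partial update into the current intent instead of starting a new search.
function refineIntent(
  session: Session,
  patch: Partial<Intent>,
  reason: string
): Session {
  const changes: IntentChange[] = [];
  for (const key of Object.keys(patch) as (keyof Intent)[]) {
    const before = session.intent[key];
    const after = patch[key];
    // Only record fields whose value actually changed.
    if (JSON.stringify(before) !== JSON.stringify(after)) {
      changes.push({ field: key, before, after, reason });
    }
  }
  return {
    intent: { ...session.intent, ...patch },
    history: [...session.history, ...changes],
  };
}

// Render the current understanding as short labels, in the spirit of a memory bar.
function memoryBar(intent: Intent): string[] {
  const chips: string[] = [];
  if (intent.budgetMax !== undefined) chips.push(`Budget: up to ${intent.budgetMax}`);
  if (intent.suburbs && intent.suburbs.length > 0) chips.push(`Suburbs: ${intent.suburbs.join(", ")}`);
  if (intent.propertyType !== undefined) chips.push(`Type: ${intent.propertyType}`);
  if (intent.maxCommuteMinutes !== undefined) chips.push(`Commute: ${intent.maxCommuteMinutes} min or less`);
  return chips;
}

// Usage: the second refinement keeps the suburb and only updates the budget.
let session: Session = { intent: {}, history: [] };
session = refineIntent(session, { budgetMax: 800000, suburbs: ["Paddington"] }, "initial prompt");
session = refineIntent(session, { budgetMax: 900000 }, "user raised budget");
console.log(memoryBar(session.intent));
```

The design choice this illustrates is that refinement is a merge, not a replacement of the whole state: earlier context persists unless the user explicitly changes it, and the history gives explainability patterns something concrete to display.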

AI in the design workflow

AI was integrated into the workflow as well as the product concept.

I used ChatGPT, Claude via Figma Make, Gemini, Stitch and AI Studio across strategy, design, prototyping and documentation. These tools helped accelerate concept development, articulate system logic, test alternative interaction patterns, and generate more structured design artefacts for cross-functional discussion.

Early DeepView designs were built using the View Design System. As the work became more prototype-driven and I started integrating AI prototyping tools more directly into the workflow, I shifted to a Tailwind-based component library. That change gave me better interoperability with tools such as Figma Make, Codex and AI Studio, and made it easier to move between concept design, coded prototypes and structured documentation.

That shift was less about aesthetics than workflow efficiency. It allowed the design process to stay closer to the behaviour of the product being imagined: iterative, testable and easier to translate into working interfaces.

Learning from live AI search behaviour

As DeepView developed, we were also able to draw on data gathered from the earlier AI test on the website. That closed an important loop between experimentation and product design.

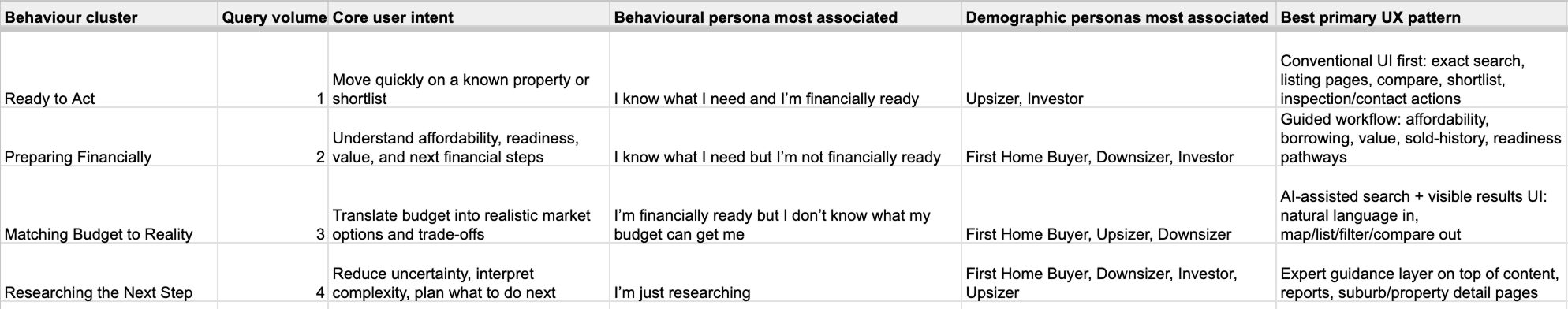

The data helped validate a number of the UI patterns we were already exploring for an AI-first search experience, particularly around conversational input, intent refinement and more guided forms of discovery. It also gave me a clearer understanding of user intent by surfacing unexpected themes in the kinds of queries people were making. That was useful not just at interface level, but at product-strategy level, because it showed that users were often expressing needs, constraints and trade-offs in ways that sat outside conventional filter logic.

One of the most valuable outcomes was that it helped me rethink the persona model for the product. Rather than framing personas primarily in demographic terms, I began to redefine them behaviourally and through a lens of financial readiness. That created a more useful foundation for designing AI-supported journeys, because it aligned the experience more closely to how people actually approach property decisions: not just by who they are, but by how ready they are, what trade-offs they are managing, and how confidently they can act.

Outcome

Across the three phases, the work created a clearer path from experimentation to product strategy.

Phase 1 validated conversational assistance inside an existing property experience.

Phase 2 tested more deliberate AI-search patterns and clarified how AI should sit within the wider search ecosystem.

Phase 3 reframed the opportunity at product level, resulting in DeepView: a more coherent vision for an AI-first property decision assistant built around continuity, explainability and evidence.

The intended impact was to reduce repeated input, lower cognitive load, improve user confidence and help people reach decisions faster through cumulative rather than fragmented interactions. In the DeepView deck, these are framed as desired MVP outcomes rather than shipped metrics.

Reflection

What made this work interesting was not just the use of AI, but the progression in how AI was applied.

The initiative moved from embedded assistance, to AI-shaped search, to a more fully rethought product model. That progression made it possible to test value incrementally while steadily reframing the problem.

For me, the most important insight was that AI was most useful when it supported continuity, clarity and decision-making rather than simply generating answers. The strongest ideas in DeepView came from treating property search as an evolving intent problem and designing both the product and the workflow around that reality.